There's one question that changes everything. AI just made ignoring it unforgivable.

I've watched product teams make the same mistake more times than I can count. Someone gets excited about an idea, the enthusiasm spreads, and suddenly there's an all-hands push to build a new feature.

Other projects get quietly deprioritized. People stay late. Resources get stretched. And then, somewhere after everything ships and the initial excitement dies down, the team finally pauses long enough to look at the data... and realizes the thing they just built isn't something users actually wanted.

The question almost nobody asks — at least not until it's too late — is: should we build this at all?

I was first introduced to a framework for answering that question by JW Kim, who was my manager at Givelify and one of the sharpest product thinkers I've had the privilege of working with. JW pointed me toward a book called The Right It by Alberto Savoia, who was Google's first Engineering Director. The core argument stuck with me immediately: at least 80% of new products fail in the market. Not because they were built poorly, but because they were the wrong thing to build in the first place. Savoia calls it the Law of Market Failure, and it applies to startups and Fortune 500s equally.

What's changed — significantly, and recently — is how cheap and fast it's become to actually do something about it.

The Framework: Pretotyping

Savoia's solution is something he calls pretotyping (not a typo — it comes before prototyping, and the distinction matters).

A prototype tests whether something can be built and how it should work. A pretotype tests something far more fundamental: whether anyone actually wants it. The goal is to answer that question as quickly and cheaply as possible, before a single sprint of real development begins.

The most famous example is one I've repeated in a lot of conversations: Jeff Hawkins, the founder of Palm, carved a wooden block roughly the size of the device he was imagining, drew an interface on a slip of paper, and carried it around for weeks before committing to build anything. He'd pull it out in meetings. Pretend to take notes. Check his "calendar." He wasn't testing functionality — he was testing his own behavior. Would he actually reach for this thing? Would it fit naturally into how he worked?

It did. The Palm Pilot became one of the defining personal devices of its era.

IBM did something similar, and even more theatrical, to test early interest in speech-to-text software before the technology was actually feasible. A human typist, hidden in an adjacent room, transcribed speech in real time while users believed they were interacting with a working AI system. Those users' reactions told IBM what it needed to know about whether the concept had commercial legs, all without a single line of production code being written.

Fake it before you make it. Build the smallest possible simulation of the experience, put it in front of real people, and watch what they actually do.

Skin in the Game

Here's the piece of Savoia's framework I find myself thinking about most, because it cuts directly against one of the things teams rely most heavily on: survey data.

Opinions are free. If you ask someone "would you use a feature that does X?" they will almost always say yes, because agreeing costs them nothing. Savoia calls this the "no skin in the game" problem — and it's why focus group results, surveys, and stakeholder enthusiasm are all notoriously unreliable predictors of what people will actually do.

People tell you what they think you want to hear, or what they think they'd want, or just whatever answer gets them out of the conversation fastest.

Useful pretotyping data requires the opposite: someone has to give something up to signal real interest. Time spent engaging with a prototype they could've ignored. An email address. A click-through on a "Sign up" button that doesn't go anywhere yet. Money, in the strongest version of the approach.

The Tesla Model 3 reservation program is the clearest modern example of this I know. In 2016, Tesla didn't survey people about whether they'd consider buying an electric car someday. They asked people to put down a $1,000 deposit on a car that didn't exist yet, that most of them had never seen in person, with delivery estimated more than a year out. Over 325,000 people did it in the first week. That number carried real weight precisely because it required a considered decision rather than a reflexive answer to a question. (Worth noting: the $1,000 deposit was fully refundable, and roughly 23% of those reservations were eventually cancelled. But it's still a far more reliable signal than any survey would've produced.)

A poll asking "do you want an electric car someday?" would have produced a bigger number and told you almost nothing useful.

Savoia calls the good stuff YODA, short for Your Own Data: collected firsthand, recently, with something at stake. The more the person giving it has to put on the line, the more you can trust it. And critically, the stake doesn't have to be money. Getting someone to spend 20 minutes with a prototype they could've declined to engage with? That's real data too.

I've Actually Done This

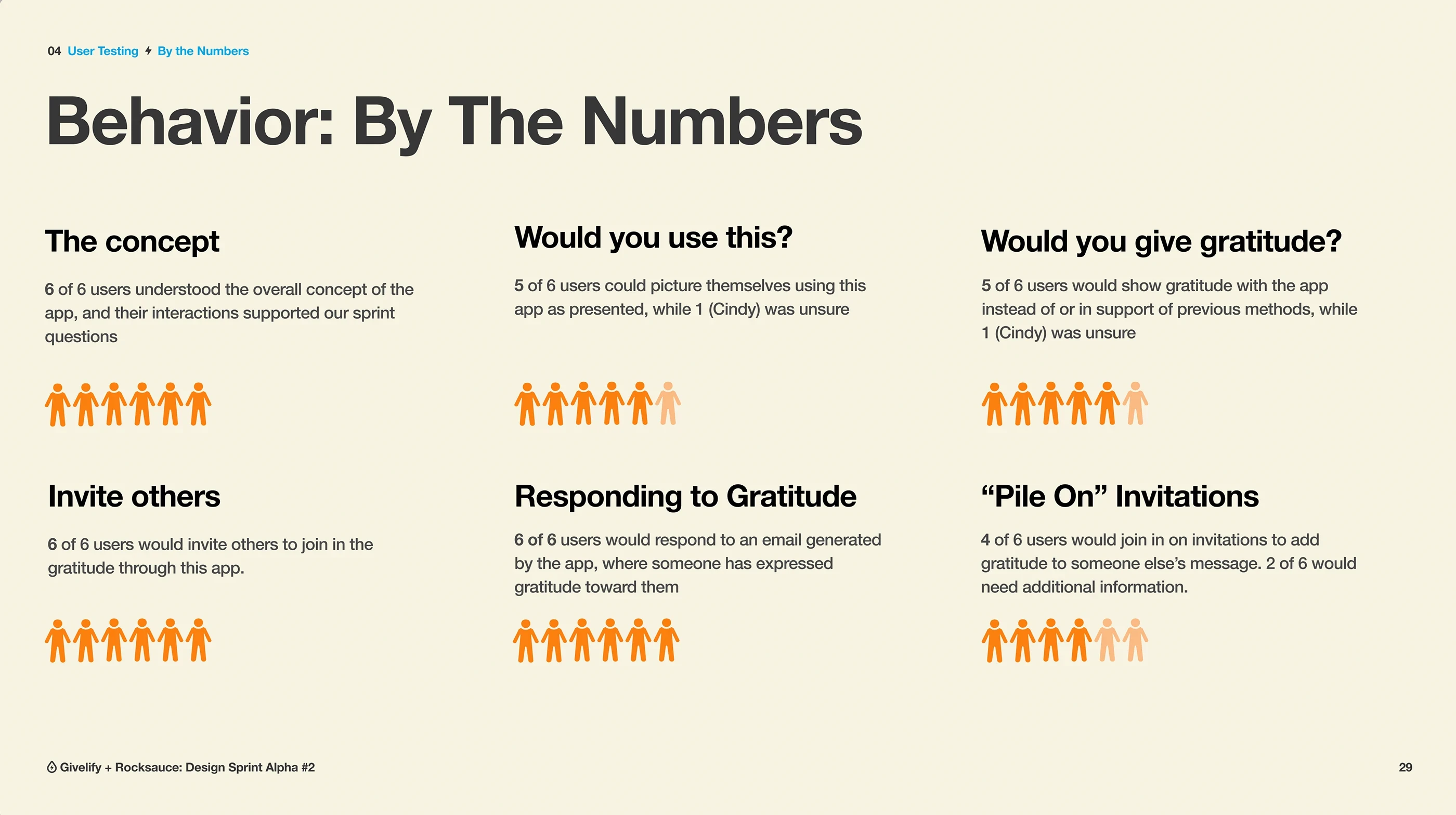

JW was also the person who initiated our collaboration with Rocksauce Studios at Givelify, and in the span of about six weeks, our team ran three rapid pretotyping sprint cycles with them, back to back. Each one tackled a distinct product question. Could a "giving circles" feature make collective giving feel more like community than transaction? Would users genuinely engage with a dedicated gratitude experience tied to the donation flow? What would it take to build meaningful event and fundraising tools for faith organizations?

Each sprint followed the same structure: a two-day cross-functional workshop to define the challenge and align on what we were actually trying to learn, then a week building a Figma prototype realistic enough to feel like a real app, then live user testing sessions with people who matched our actual audience.

The findings were granular in a way that stakeholder opinions never are. One sprint surfaced that a bowl metaphor for collecting gratitude messages was instantly clear to users with a Sunday School background and completely opaque to everyone else. Another found that "Seek Encouragement" as a label confused four out of six testers badly enough that they couldn't accurately describe what the feature did.

You don't get that kind of specific, behavioral data from a meeting. You get it by watching a real person interact with a real thing — even when that real thing is mostly smoke and mirrors.

What I remember most clearly about that process, though, wasn't the findings. It was how much work it took just to get a prototype to a fidelity where users could genuinely suspend disbelief. Good Figma prototyping is skilled work. Getting the flows right, making the interactions feel plausible, keeping the illusion intact when someone taps somewhere unexpected — it's non-trivial. We had talented people and a dedicated external partner, and it still consumed a significant chunk of each sprint cycle just to produce the artifact we needed to test.

That constraint mattered. It put a practical ceiling on how often, and how affordably, any team could realistically run this kind of validation cycle.

That ceiling is gone. The walls might still be there — the process, the rigor, the need to actually test something real — but the space above them? Wide open.

What AI Just Changed

This is the part of the conversation that I don't think has fully landed yet in most organizations… and it should.

Tools like Figma Make, Vercel's v0, Cursor, and Claude have compressed the time it takes to go from "here's an idea" to "here's a functional, interactive thing you can put in front of users" from weeks to days, and sometimes hours.

Not a click-through simulation. Not a polished mockup. An actual working application — with real logic, real content, real behavior — that a user can genuinely interact with and respond to honestly.

The fidelity difference matters enormously for the quality of what you learn. A Figma prototype that looks like an app tells you something. A working prototype that behaves like an app tells you something far more useful, because users aren't unconsciously compensating for the gap between simulation and reality. They're just using a thing. And reacting to it.

I've built two tools this way myself: an AI-powered out-of-office message generator and a custom integration assessment tool that generates personalized recommendations via Claude's API. Both started as product questions I needed to validate: would people actually engage with this? Would it work the way I imagined? Both went from concept to testable, functional experience in days. The assessment tool specifically answered a question that had been on my mind for months at Celigo: would prospects complete a detailed technical intake form if it promised something genuinely valuable in return? We suddenly had the ability to find out fast, because we had something real to put in front of them.

That's pretotyping. With AI, it's pretotyping that almost any motivated person on your team can now do, without a specialized design partner, without a multi-week sprint cycle, and without a development budget.

So Why Isn't Everyone Doing This?

Honestly, I think most companies just haven't connected these dots yet. The AI conversation has largely been about productivity and automation — doing existing work faster. The pretotyping conversation has largely lived inside product and UX circles. They haven't met in the middle.

When they do, the implication is significant: the biggest excuse for not validating ideas before building them — the cost, the time, the resources required to produce something testable — has been largely removed. What used to require a dedicated sprint cycle, a design partner, and several weeks of skilled work can now happen in an afternoon, with tools that are widely available and increasingly accessible to non-technical teams.

The 80% failure rate Savoia documented isn't a fixed law of nature. It's what happens when organizations consistently skip the step of asking "should we build this?" before asking "how do we build this?"

That step just got dramatically cheaper. The question is whether your team is taking advantage of it.

I'm currently seeking Director/VP-level creative leadership roles at established tech/SaaS companies. My background includes:

Brand Transformation: Led award-winning rebrand at Celigo (GDUSA, Gold ADDY recognition) that saved $500K+ on a single project

Creative Operations: Built systems that increased team output 238% while maintaining quality

Strategic Innovation: Developed AI-powered tools and data-informed processes that connect creative excellence to measurable business impact

View my portfolio or connect with me on LinkedIn if you'd like to chat about creative leadership, operational excellence, or how to build more research-informed creative teams.